*Commercializing smart voice products doesn’t have to start from the underlying algorithms. This article focuses on how to leverage SISTC’s MEMS microphone modules and open-source voice platforms with mainstream embedded ecosystems (ESP32, Raspberry Pi, STM32, NVIDIA Jetson) for rapid secondary development, helping developers accelerate time‑to‑market for intelligent voice products.*

Introduction: Redefining the Development Model for Voice Products

In the past, building a product with far‑field voice recognition, sound source localization, and noise suppression required teams to invest in full‑stack development—from microphone array design and audio signal processing algorithms to low‑level hardware drivers. This “reinventing the wheel” approach not only consumed months of engineering effort but also demanded deep expertise in acoustics, signal processing, and embedded systems.

A new development model is now taking hold: secondary development. Instead of starting from scratch, developers can base their work on mature, modular microphone array smart voice front‑ends—quickly moving from prototype validation to production.

SISTC is an active participant in this trend. This article centers on secondary development and explores how to quickly build commercially competitive voice systems using SISTC’s MEMS microphone arrays and open‑source voice platforms, combined with mainstream embedded environments (ESP32, Raspberry Pi, STM32, NVIDIA Jetson).

SISTC specializes in MEMS microphone arrays and open‑source voice platforms. Explore our products at Open Source Voice Platforms and Sensor Module Center.

1. Market Trends for MEMS Microphone Arrays and the Rise of Secondary Development

The MEMS microphone market is growing rapidly. According to industry reports, the global MEMS microphone market reached $2.92 billion in 2025 and is projected to grow to $3.36 billion in 2026 (CAGR 15.2%). By 2030, the market is expected to reach $5.91 billion.

Key growth drivers:

- Explosion of wearables and IoT devices – each device uses 1–4 microphones, and consumers increasingly expect hands‑free voice control.

- AI‑driven voice interaction – edge AI voice assistants are expanding from smartphones to robots, smart homes, and automotive systems.

- Rising demand for offline voice processing – privacy concerns and low latency are pushing end‑side speech recognition solutions.

Behind these trends lies a significant shift: voice product development is moving from “in‑house R&D” to “secondary development.” Mature open‑source hardware platforms and standardized microphone array modules are lowering the barrier to entry, allowing even small teams to enter the smart voice space quickly.

Standalone voice secondary development platforms have become essential rapid‑prototyping tools during the product development phase. They enable developers to validate algorithms, test user experience, and refine interaction flows before final product molds are committed.

2. Why Secondary Development? Addressing Real Pain Points

Before diving into technical details, let’s clarify how secondary development solves real‑world engineering challenges.

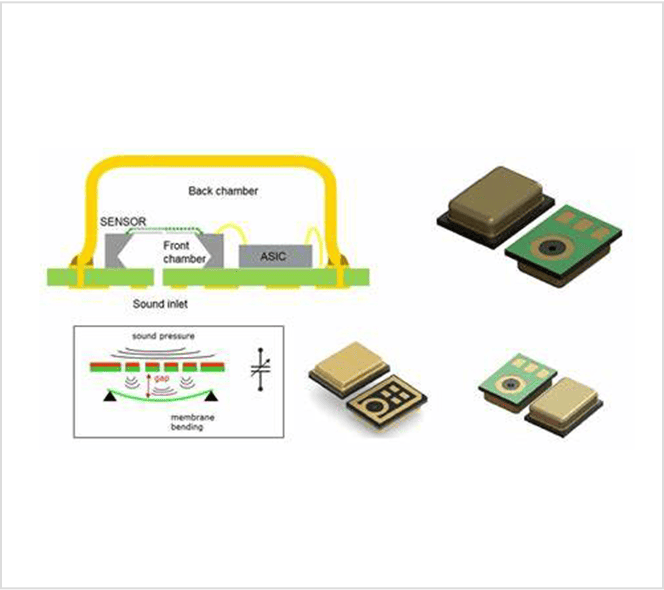

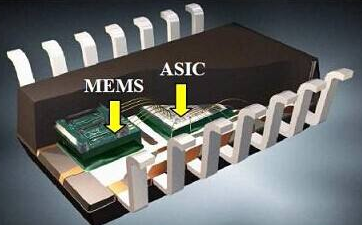

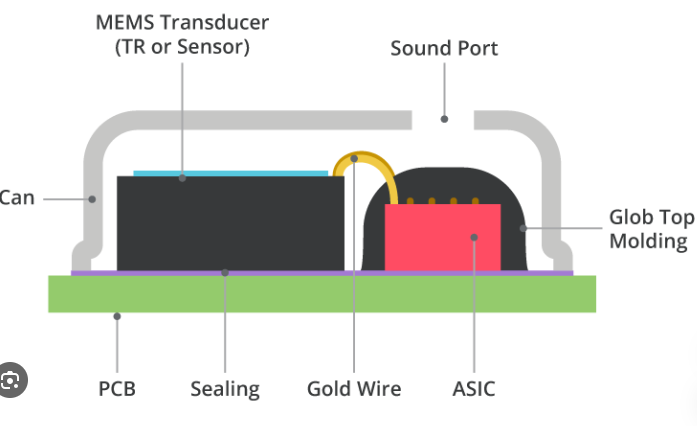

Pain Point 1: High Barrier to Acoustic Hardware Design

Designing a microphone array involves far more than placing a few microphones on a PCB – MEMS sensor selection, acoustic structure design, array geometry, and RF noise isolation all require specialized acoustics and hardware engineering expertise. This is a heavy burden for software‑focused teams or startups.

Secondary development solution: Use standard microphone array modules from suppliers like SISTC. These modules have already undergone acoustic validation and hardware optimization. Developers don’t need to worry about internal acoustic details – they simply connect via standard interfaces (I²S, USB) to obtain high‑quality multi‑channel audio.
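Multi‑channel PCM captured over USB or I²S usually arrives interleaved: one sample per channel, frame after frame. The sketch below splits such a buffer into per‑channel lists. It assumes 16‑bit little‑endian samples, and the function name is illustrative rather than part of any SISTC API.

```python
import struct

def deinterleave_s16le(buffer: bytes, channels: int) -> list[list[int]]:
    """Split interleaved 16-bit little-endian PCM into one list per channel."""
    frame_bytes = 2 * channels
    if len(buffer) % frame_bytes:
        raise ValueError("buffer does not contain a whole number of frames")
    count = len(buffer) // 2
    samples = struct.unpack(f"<{count}h", buffer)
    # Channel c owns every `channels`-th sample, starting at offset c.
    return [list(samples[c::channels]) for c in range(channels)]

# Two frames of 4-channel audio: frame 0 = (1, 2, 3, 4), frame 1 = (5, 6, 7, 8)
raw = struct.pack("<8h", 1, 2, 3, 4, 5, 6, 7, 8)
per_channel = deinterleave_s16le(raw, channels=4)
print(per_channel)  # [[1, 5], [2, 6], [3, 7], [4, 8]]
```

Keeping channels separate like this is the starting point for any array processing, since beamforming and localization both compare the same instant across microphones.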

Pain Point 2: Long Algorithm Development and Validation Cycles

Developing core voice algorithms – beamforming, source localization, echo cancellation, noise suppression – often takes months of R&D. Performance tuning also heavily depends on extensive field test data.

Secondary development solution: Leverage open‑source algorithm frameworks and vendor‑provided software libraries. For example, STMicroelectronics’ FP‑AUD‑SMARTMIC1 firmware package implements a complete MEMS microphone array processing application, including acquisition, beamforming, source localization, and acoustic echo cancellation, and is portable across different STM32 MCUs. Developers can build directly on this foundation.

Pain Point 3: Heavy Cross‑Platform Porting Workload

Smart voice products appear in diverse scenarios – smart speakers, conferencing systems, robots, automotive infotainment – each often requiring a different host platform (Raspberry Pi, Jetson, ESP32, STM32). Porting algorithms and drivers across platforms can be daunting.

Secondary development solution: Choose a modular voice platform with strong multi‑platform support. SISTC’s open‑source voice platform natively supports ESP32 and USB/I²S interfaces, seamlessly integrating with Raspberry Pi, Jetson, and other mainstream environments. Developers can flexibly select the host platform without rewriting the audio capture driver from scratch.

3. Integration Guide: SISTC Microphone Arrays with Mainstream Platforms

Below we detail how to use SISTC microphone arrays with four popular embedded development platforms.

3.1 ESP32 Platform – Ideal for Entry‑Level Smart Voice Development

Ecosystem overview: The ESP32 series, with its integrated Wi‑Fi/Bluetooth, low power consumption, and rich software ecosystem, is a popular choice for entry‑level smart voice products.

Key development points:

- SISTC’s open‑source voice platform natively supports ESP32 with complete driver‑layer interfaces.

- Developers can read I²S microphone array data directly via ESP‑IDF or Arduino framework.

- With free offline speech recognition libraries (e.g., ESP‑SR), keyword wake‑up and voice command recognition can run directly on the ESP32.

Typical applications: Smart home panels, wearable voice entry devices, simple voice‑controlled switches.

3.2 Raspberry Pi Platform – Agile Prototyping and Algorithm Validation

Ecosystem overview: As the world’s most popular single‑board computer, Raspberry Pi has an active open‑source community and a complete voice processing software stack.

Secondary development value:

- Python ecosystem provides rich speech processing libraries (SpeechRecognition, openai‑whisper, etc.).

- SISTC voice platform connects seamlessly to Raspberry Pi via USB/I²S – no low‑level driver work required.

- Offline speech‑to‑text solutions (e.g., whisper.cpp) run on Raspberry Pi 5; the smaller model sizes are the practical choice for interactive latency.

Typical development path:

- Connect SISTC voice platform to Raspberry Pi via USB.

- Use ALSA/PulseAudio to recognize the audio input device.

- Write a Python loop to read microphone data.

- Integrate an open‑source STT engine (e.g., Vosk, Whisper) for speech recognition.

- Optionally connect to a conversational AI and TTS engine to complete the voice interaction loop.
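Between the capture loop and the STT engine in the path above, a prototype usually needs some way to decide where an utterance starts and ends. The following is a minimal energy‑based sketch of that step, not a production voice activity detector. The threshold, the hangover count, and both function names are assumptions chosen for illustration.

```python
import math
from typing import Iterable, Iterator

def rms(samples: list[int]) -> float:
    """Root-mean-square level of one chunk of PCM samples."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def segment_utterances(chunks: Iterable[list[int]],
                       threshold: float = 500.0,
                       hangover: int = 2) -> Iterator[list[int]]:
    """Yield one list of samples per utterance.

    An utterance starts at the first chunk whose RMS exceeds `threshold`
    and ends after `hangover` consecutive quiet chunks.
    """
    current: list[int] = []
    quiet = 0
    for chunk in chunks:
        if rms(chunk) > threshold:
            current.extend(chunk)
            quiet = 0
        elif current:
            # Keep trailing quiet chunks so word endings are not clipped.
            current.extend(chunk)
            quiet += 1
            if quiet >= hangover:
                yield current
                current, quiet = [], 0
    if current:
        yield current

# Synthetic stream: silence, two loud chunks, then silence again.
silence, speech = [0] * 4, [1000, -1000, 1000, -1000]
stream = [silence, speech, speech, silence, silence, silence]
utterances = list(segment_utterances(stream))
print(len(utterances), len(utterances[0]))  # 1 16
```

Each yielded utterance can then be handed to whichever STT engine is chosen. In a real room the threshold would need tuning against the measured noise floor.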

Typical applications: Open‑source smart speakers, meeting transcription systems, robot voice interaction prototypes.

3.3 STM32 Platform – Robust Choice for Industrial and Mass Production

Ecosystem overview: STM32 MCUs are widely used in industrial control, automotive electronics, and consumer electronics mass production. ST provides a complete software toolchain for secondary development with MEMS microphone arrays.

Secondary development ecosystem highlights:

- FP‑AUD‑SMARTMIC1: STM32Cube function pack that implements a complete MEMS microphone array processing application – digital MEMS microphone acquisition, beamforming, source localization, and acoustic echo cancellation. Processed audio can be sent to a host via USB or output through a speaker.

- BlueCoin development kit (STEVAL‑BCNKT01V1): Integrates an array of four digital MEMS microphones and a high‑performance STM32F446 MCU (180 MHz), and supports real‑time adaptive beamforming and source localization.

- STM32Cube expansion: FP‑AUD‑SMARTMIC1 is built on STM32Cube software technology, making it easy to port across different STM32 MCUs.
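To make "source localization" concrete, here is a host‑side sketch of the textbook two‑microphone idea: estimate the time difference of arrival by cross‑correlation, then convert it to a far‑field angle with θ = arcsin(c·τ/d). This is not ST's implementation. The function names, the 6 cm spacing, and the 16 kHz sample rate are illustrative assumptions.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, approximate value in air at about 20 °C

def best_lag(ref: list[float], other: list[float], max_lag: int) -> int:
    """Return the lag (in samples) by which `other` trails `ref`.

    A positive result means the sound reached `ref` first.
    """
    def correlation(lag: int) -> float:
        total = 0.0
        for i, value in enumerate(ref):
            j = i + lag
            if 0 <= j < len(other):
                total += value * other[j]
        return total
    return max(range(-max_lag, max_lag + 1), key=correlation)

def arrival_angle_deg(lag: int, sample_rate: int, spacing_m: float) -> float:
    """Convert a sample lag into a far-field arrival angle from broadside."""
    ratio = SPEED_OF_SOUND * lag / (sample_rate * spacing_m)
    ratio = max(-1.0, min(1.0, ratio))  # clamp against rounding and noise
    return math.degrees(math.asin(ratio))

# Synthetic check: a click that hits microphone B two samples after microphone A.
mic_a = [0.0] * 32
mic_b = [0.0] * 32
mic_a[10] = 1.0
mic_b[12] = 1.0
lag = best_lag(mic_a, mic_b, max_lag=4)
angle = arrival_angle_deg(lag, sample_rate=16000, spacing_m=0.06)
print(lag, round(angle, 1))
```

With integer lags the angular resolution is coarse at 16 kHz, which is one reason packaged libraries are attractive for production use.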

Value for developers:

The STM32 ecosystem provides a clear path from prototype validation to mass production. Teams don’t need to re‑implement beamforming or source localization from scratch – they can build directly on ST’s validated software libraries, dramatically shortening time‑to‑market.

Typical applications: Industrial acoustic monitoring equipment, smart voice control panels, automotive voice interaction modules.

3.4 NVIDIA Jetson Platform – The Compute Engine for Edge AI Voice

Ecosystem overview: NVIDIA Jetson (Orin NX, Orin Nano, etc.) is the leading platform for edge AI computing, especially suitable for running large language models and on‑device voice AI inference.

Development path:

- Connect SISTC voice platform (USB/I²S) to Jetson as the audio input front‑end.

- Leverage Jetson’s GPU compute to run Whisper speech‑to‑text models locally for low‑latency, privacy‑preserving offline speech recognition.

- Combine with frameworks like Ollama to run large language models entirely on the Jetson, building a complete voice AI loop without cloud dependency.

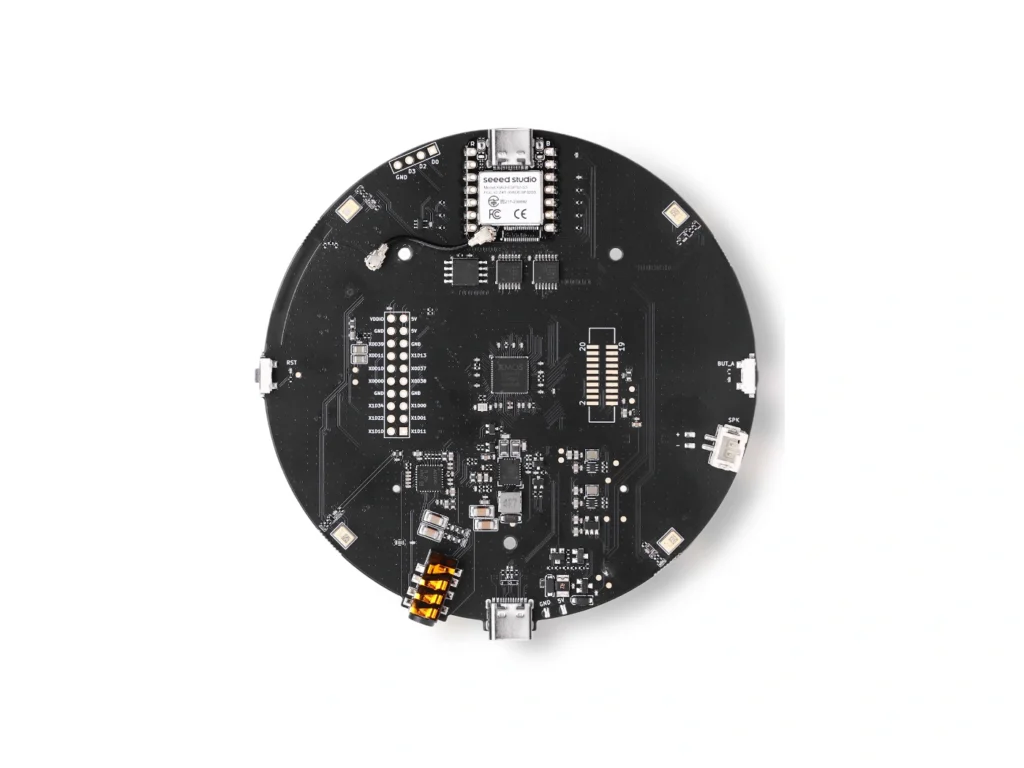
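The last step of the path above, handing a transcript to a locally hosted model, can be sketched with nothing but the standard library. The example targets Ollama's local HTTP endpoint on its default port. The model tag `llama3.2` is a placeholder for whatever model has been pulled onto the device, and the function names are illustrative.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint

def build_request(prompt: str, model: str = "llama3.2") -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def ask_llm(prompt: str, model: str = "llama3.2", timeout: float = 60.0) -> str:
    """Send the transcript to the model and return its reply text.

    Requires a running Ollama server; nothing leaves the device.
    """
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))["response"]

# Building the request needs no server, so it can be inspected offline.
request = build_request("Turn on the meeting room lights.")
print(request.get_method(), request.full_url)
```

Calling `ask_llm(transcript)` with the STT output closes the loop; the reply text can then go to a TTS engine.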

Secondary development example: Using a microphone array like ReSpeaker XVF3800 with Jetson AGX Orin, developers can build a fully local smart voice assistant, suitable for private offices, kiosks, meeting rooms, and other environments requiring offline voice control with low latency.

Typical applications: Service robot voice dialogue, fully offline smart assistants, edge AI voice workstations.

4. Comparison of Mainstream Open‑Source Microphone Array Platforms for Secondary Development

To help developers make an informed choice, we compare SISTC’s platform with other popular open‑source solutions.

| Platform | Mic Config | Host Platform | Interface | Software Ecosystem | Typical Secondary Dev Scenario |
|---|---|---|---|---|---|
| SISTC Open Voice Platform | MEMS array (customizable channels) | ESP32 / USB / I²S | USB / I²S | Native ESP32 support, compatible with mainstream OS audio stacks | Customizable enterprise‑grade secondary development for differentiated products |
| Seeed VoiceCard | 2/4/6/8 MEMS | Raspberry Pi | I²S | Linux ALSA/PulseAudio, kernel driver & config templates | Raspberry Pi embedded voice apps, multi‑source localization |
| ST BlueCoin | 4×MP34DT06J | STM32F446 | I²S / BLE | FP‑AUD‑SMARTMIC1 (beamforming, source localization, echo cancellation) | Sensor fusion voice platform prototyping |
| ReSpeaker XVF3800 | 4/8 MEMS | USB / I²S | USB / I²S | XMOS VocalFusion firmware, far‑field voice capture | Smart speakers, conferencing systems |
| ANAVI Dev Mic | Single MEMS | RP2040 | USB | Open‑source firmware (Apache‑2.0), Raspberry Pi Pico SDK | Open‑source voice hardware experiments, offline STT education |
| Acoular + 16‑ch array | 16 MEMS | PC | USB | Python framework, spatial/frequency filtering, real‑time visualization | Acoustics research, microphone array data acquisition & analysis |
| MATRIX Voice | 7 MEMS | FPGA + ARM | I²S | Supports AWS Alexa, Google Voice API, etc. | Developer voice recognition application creation |

Selection advice:

- Lowest entry barrier & plug‑and‑play? → Seeed VoiceCard (2–8 channels, Linux drivers ready).

- High‑performance AI voice with PC as processing hub? → SISTC USB array + PC ecosystem (full Python stack).

- Customized production solution with embedded Linux? → SISTC + Jetson/Raspberry Pi (full compute flexibility for offline LLMs).

- STM32 expert, sensor fusion and low‑power voice prototyping? → ST BlueCoin (BLE/sensor/MEMS deep integration).

5. From Prototype to Production: The Role of SISTC’s Standalone Voice Secondary Development Platform

When moving to mass production, developer needs shift from “quick validation” to “stable, reliable, and cost‑effective.” A standalone voice secondary development platform plays an irreplaceable role – it is both a rapid prototyping tool before final molds and an “acoustic simulation environment” during product development.

Core value of a standalone voice secondary development platform:

- Acoustic pre‑validation: Before the final enclosure is molded, developers can use the standard platform to validate far‑field pickup distance, beamforming angle, multi‑source separation – catching acoustic issues before mold tooling.

- Rapid algorithm iteration: The platform provides a standardized audio data stream, allowing algorithm teams to test and optimize new voice processing algorithms without waiting for new PCB spins.

- Multi‑scenario configuration validation: Different applications require different channel counts, pickup angles, and noise reduction levels. The flexible platform lets teams validate multiple product variants on the same hardware.

- Smooth migration to production: After acoustic validation and algorithm tuning, SISTC provides technical migration support from the development platform to production modules, ensuring acoustic consistency between prototype and final product.

In a competitive market, a standalone voice secondary development platform has become a strategic tool for shortening R&D cycles and reducing acoustic design risk.

6. Getting Started: Build Your First MEMS Microphone Array Voice System

Below is a general quick‑start guide. Because SISTC’s voice platform natively supports ESP32 and exposes USB/I²S interfaces, the hardware step applies to any host; Steps 2 to 4 assume a Linux host such as Raspberry Pi or Jetson.

Step 1: Hardware Connection

```text
SISTC Voice Platform
  └── USB interface ──→ Connect to host (PC / Raspberry Pi / Jetson)
  OR
  └── I²S interface ──→ Connect to ESP32 / STM32 / etc.
```

USB is plug‑and‑play, no driver configuration required – ideal for quick prototyping. I²S provides lower latency, suitable for real‑time production designs.

Step 2: System Recognition

Linux (Raspberry Pi / Jetson):

```bash
# List audio devices
arecord -l

# View recording device info
cat /proc/asound/cards
```

SISTC voice platform appears as a USB audio device and is immediately accessible via ALSA/PulseAudio.
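For scripted setups it can help to find the array's ALSA device name programmatically instead of reading the listing by eye. The sketch below parses text in the format `arecord -l` prints and builds the matching `hw:card,device` string. The sample device name is made up, and the parser assumes the English (non‑localized) output format.

```python
import re

CARD_LINE = re.compile(
    r"^card (?P<card>\d+): (?P<id>\S+) \[(?P<name>[^\]]*)\], "
    r"device (?P<device>\d+):"
)

def list_capture_devices(arecord_output: str) -> list[dict]:
    """Parse `arecord -l` output into entries with a name and an ALSA hw string."""
    devices = []
    for line in arecord_output.splitlines():
        match = CARD_LINE.match(line)
        if match:
            card, device = int(match["card"]), int(match["device"])
            devices.append({
                "name": match["name"],
                "alsa": f"hw:{card},{device}",
            })
    return devices

# Sample text in the format printed by `arecord -l`; the device name is hypothetical.
sample = """**** List of CAPTURE Hardware Devices ****
card 1: Array [Example USB Mic Array], device 0: USB Audio [USB Audio]
  Subdevices: 1/1
  Subdevice #0: subdevice #0
"""
devices = list_capture_devices(sample)
print(devices)  # [{'name': 'Example USB Mic Array', 'alsa': 'hw:1,0'}]
```

On a live system the input string would come from `subprocess.run(["arecord", "-l"], capture_output=True, text=True).stdout`.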

Step 3: Audio Capture Validation

Python script:

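```python
import numpy as np
import pyaudio

CHUNK = 1024              # frames per read
FORMAT = pyaudio.paInt16  # 16-bit signed samples
CHANNELS = 4              # set according to your array
RATE = 16000              # sample rate in Hz

p = pyaudio.PyAudio()
stream = p.open(format=FORMAT,
                channels=CHANNELS,
                rate=RATE,
                input=True,
                frames_per_buffer=CHUNK)

try:
    print("Capturing audio...")
    data = stream.read(CHUNK)
    # Samples arrive interleaved; reshape to (frames, channels).
    audio_data = np.frombuffer(data, dtype=np.int16).reshape(-1, CHANNELS)
    print(f"Captured, shape: {audio_data.shape}")
finally:
    # Release the audio device even if the read fails.
    stream.stop_stream()
    stream.close()
    p.terminate()
```

Step 4: Integrate Speech Recognition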

Using open‑source Whisper (Raspberry Pi / Jetson):

```bash
pip install openai-whisper

# Write a Python script (stt.py) to record and transcribe, then run it
python3 stt.py
```
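The article does not list the contents of `stt.py`, so the following is only one possible sketch. It records a short clip with `arecord` and transcribes it with the `openai-whisper` package. The device string `plughw:1,0`, the five‑second duration, the `base` model size, and the file name are assumptions; Whisper also needs `ffmpeg` installed to read audio files.

```python
import subprocess

def build_arecord_cmd(wav_path: str, device: str = "plughw:1,0",
                      seconds: int = 5, rate: int = 16000) -> list[str]:
    """Command line that records mono 16-bit audio to a WAV file via ALSA.

    The plughw device lets ALSA convert channel count and rate if needed.
    """
    return ["arecord", "-D", device, "-f", "S16_LE", "-r", str(rate),
            "-c", "1", "-d", str(seconds), wav_path]

def record(wav_path: str, **options) -> None:
    """Run arecord and raise if the recording fails."""
    subprocess.run(build_arecord_cmd(wav_path, **options), check=True)

def transcribe(wav_path: str, model_name: str = "base") -> str:
    """Transcribe a WAV file with openai-whisper and return the text."""
    import whisper  # imported here so the rest of the script loads without it
    model = whisper.load_model(model_name)
    return model.transcribe(wav_path)["text"].strip()

def main() -> None:
    record("utterance.wav")
    print(transcribe("utterance.wav"))

# Show the recording command without touching any audio hardware.
print(" ".join(build_arecord_cmd("utterance.wav")))
# arecord -D plughw:1,0 -f S16_LE -r 16000 -c 1 -d 5 utterance.wav
```

To use this as a script, add a call to `main()` at the bottom of the file. Recording a single channel keeps the example simple; a beamformed channel would normally be fed to the recognizer instead.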

Step 5: Algorithm Adaptation & Secondary Development

With a validated audio stream, developers can:

- Integrate beamforming for directional voice enhancement

- Deploy source localization for speaker tracking

- Run edge AI models for keyword wake‑up and command recognition

- Connect large language models (e.g., on Jetson) for end‑side voice AI assistants
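As an illustration of the first item, here is a minimal delay‑and‑sum beamformer, the simplest member of the beamforming family. It uses integer sample delays and plain Python lists for clarity. The function name and the synthetic four‑microphone signal are assumptions, and a production design would typically use fractional delays or a vendor library.

```python
def delay_and_sum(channels: list[list[float]], delays: list[int]) -> list[float]:
    """Align each channel by its integer sample delay, then average.

    `delays[k]` is how many samples later the target sound reaches
    microphone k compared with the earliest microphone (must be >= 0).
    """
    if len(channels) != len(delays):
        raise ValueError("need exactly one delay per channel")
    if any(d < 0 for d in delays):
        raise ValueError("delays must be non-negative")
    length = min(len(ch) - d for ch, d in zip(channels, delays))
    output = []
    for n in range(max(length, 0)):
        # Reading channel k at n + delay undoes its arrival lag.
        total = sum(ch[n + d] for ch, d in zip(channels, delays))
        output.append(total / len(channels))
    return output

# A unit click arriving 0, 1, 2, and 3 samples late across four microphones.
mics = [[0.0] * 8 for _ in range(4)]
for k in range(4):
    mics[k][2 + k] = 1.0

steered = delay_and_sum(mics, delays=[0, 1, 2, 3])    # aimed at the source
unsteered = delay_and_sum(mics, delays=[0, 0, 0, 0])  # aimed at broadside
print(max(steered), max(unsteered))  # 1.0 0.25
```

Steering toward the source adds the four clicks coherently, while the unsteered sum smears them: the same mechanism that lets an array favor one talker over off‑axis noise.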

7. Frequently Asked Questions

Q1: What is secondary development for microphone arrays?

A: Secondary development means building application‑layer functionality on top of a supplier’s standard microphone array module and associated software. You don’t need to redesign the acoustic hardware or implement low‑level algorithms – you focus on voice model adaptation, user experience fine‑tuning, and production details, dramatically shortening time‑to‑market.

Q2: Which host MCUs/SoCs does SISTC’s open‑source voice platform support?

A: SISTC’s platform natively supports ESP32 and, via USB/I²S interfaces, is compatible with Raspberry Pi, Jetson, STM32, and PC. Choose the best platform for your application.

Q3: What background knowledge is required for secondary development with MEMS microphone arrays?

A: Basic C/Python for embedded development; familiarity with Linux audio stack (ALSA, PulseAudio) or embedded peripheral drivers (I²S, GPIO). An understanding of signal processing and beamforming concepts is helpful but not strictly required – most vendors provide high‑level APIs.

Q4: How long does it take from secondary development to mass production?

A: With mature microphone array modules and open‑source algorithm frameworks, 3–6 months is typical. Most time is spent on algorithm tuning, application iteration, and production testing. SISTC provides migration support to shorten this cycle.

Q5: Can MEMS microphone arrays work reliably in noisy industrial environments?

A: Yes. With beamforming and advanced noise reduction, MEMS arrays can capture clear voice even in loud industrial settings. Proper microphone selection and algorithm optimization are critical – pre‑configured solutions can be provided.

Q6: How can I request samples for secondary development?

A: SISTC offers free samples and technical evaluation. Send an email to denny_tan@sistc.com to apply. Samples help validate product concepts, evaluate acoustic performance, and accelerate prototyping.

8. Secondary Development Resources & Technical Documentation

| Resource | Description |
|---|---|
| SISTC Open Source Voice Platforms | High‑performance modular voice interface integrating MEMS microphone arrays and AI processors |
| SISTC Sensor Module Center | Full list of microphone array modules and acoustic modules with technical specifications |
| ST FP‑AUD‑SMARTMIC1 | STM32Cube function pack with beamforming, source localization, and echo cancellation – ideal for STM32‑based secondary development |
| ST BlueCoin Kit | Complete development platform with 4‑digital MEMS array and STM32F446 for sensor fusion and voice applications |
| Seeed VoiceCard open‑source project | Driver and configuration tools for Raspberry Pi microphone arrays (2–8 channel templates) |
| NVIDIA Jetson AI Voice Assistant Tutorial | Practical guide for building a local AI voice assistant on Jetson with Whisper and camera integration |

Contact for samples & evaluation: denny_tan@sistc.com

9. Conclusion: Secondary Development Enables Agile Smart Voice Product Deployment

MEMS microphone array secondary development is transforming how smart voice products are built. Using mature open‑source voice platforms, development teams gain:

- Dramatically shortened development cycles – from months to weeks, focusing on application innovation rather than foundational acoustics.

- Lower technical barriers – with modular hardware and open‑source code frameworks, even small teams can quickly enter the smart voice space.

- Flexible customization – multi‑host support and scalable software architecture meet differentiated product requirements.

- Seamless path from prototype to production – the standalone voice secondary development platform serves as an acoustic validation and algorithm tuning environment before final molds, reducing productization risk.

Looking ahead, as edge AI voice technology, multi‑modal interaction, and open‑source hardware ecosystems continue to evolve, secondary development will become even more valuable in smart voice product creation. SISTC’s open‑source voice platform solutions aim to provide efficient support from prototype validation to mass production.

Ready to start your smart voice project? SISTC offers free samples for technical evaluation. Email denny_tan@sistc.com to get your development platform today.

Published: May 9, 2026 | Last updated: May 9, 2026

This article is part of SISTC’s technical blog series. For questions about MEMS microphone array secondary development, open‑source voice platforms, or sample requests, contact denny_tan@sistc.com.