The wearable landscape is undergoing a seismic shift. While the headlines are dominated by Apple’s rumored “H90” AirPods with integrated infrared cameras and the rapid growth of Meta’s Ray-Ban AI glasses, a critical engineering truth remains overlooked: vision may provide the context, but audio provides the command.

As we move into the era of “perception-grade” wearables, the synergy between visual sensors and MEMS microphone arrays is what will define the winners of the AI hardware race.

The “AI Camera” Dilemma: Why Audio is the Solution

Recent leaks regarding Apple’s upcoming AI-powered AirPods Pro suggest a move toward low-resolution infrared sensors designed not for photography, but for Siri’s environmental awareness. These devices aim to “see” what you see to provide contextual help. However, this “always-on” visual perception faces three massive hurdles: Power, Heat, and Privacy.

- Power Constraints: Continuous video processing can quickly drain an earbud battery, which typically holds only about 45 mAh.

- Thermal Throttling: Processing visual data next to the ear canal creates significant heat dissipation issues.

- Regulatory Walls: The EU AI Act, most of whose provisions apply from August 2026, imposes transparency obligations that can extend to devices sensing people in public spaces.

The SISTC Advantage: By utilizing low-power MEMS microphones as a “trigger” for visual sensors, developers can save up to 70% in system-level power consumption. Our acoustic sensors act as the “always-alert” sentinel, waking up power-hungry cameras only when specific auditory cues or environmental shifts are detected.
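As a rough illustration of the trigger idea, the Python sketch below gates a camera on per-frame sound levels and estimates the resulting power savings. Everything in it is an assumption made for the example: the `PowerBudget` and `gate_camera` names, the 1 mW and 30 mW power figures, and the dB thresholds are hypothetical, not SISTC specifications or measurements. It shows how a figure in the range quoted above could arise from a duty cycle, not how any shipping product works.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class PowerBudget:
    """Illustrative power figures in milliwatts (assumed, not measured)."""
    mic_mw: float     # always-on microphone plus wake detector
    camera_mw: float  # camera plus vision pipeline while active

    def always_on_mw(self) -> float:
        # Baseline: microphone and camera both run continuously.
        return self.mic_mw + self.camera_mw

    def gated_mw(self, duty_cycle: float) -> float:
        # Gated: the microphone runs continuously, the camera only part of the time.
        if not 0.0 <= duty_cycle <= 1.0:
            raise ValueError("duty_cycle must be between 0 and 1")
        return self.mic_mw + duty_cycle * self.camera_mw

    def savings(self, duty_cycle: float) -> float:
        # Fraction of baseline power saved by gating the camera.
        return 1.0 - self.gated_mw(duty_cycle) / self.always_on_mw()


def gate_camera(levels_db: Iterable[float], on_db: float, off_db: float,
                hold_frames: int) -> List[bool]:
    """Return the camera state per frame using a hysteresis trigger.

    The camera wakes when the level reaches on_db and sleeps again once the
    level has stayed below off_db for hold_frames consecutive frames.
    """
    states = []
    awake = False
    quiet = 0
    for level in levels_db:
        if level >= on_db:
            awake, quiet = True, 0
        elif awake and level < off_db:
            quiet += 1
            if quiet >= hold_frames:
                awake = False
        else:
            quiet = 0
        states.append(awake)
    return states


# Hypothetical per-frame sound levels in dB: quiet, one loud event, quiet again.
levels = [40, 42, 70, 55, 45, 44, 43, 41]
states = gate_camera(levels, on_db=65, off_db=50, hold_frames=2)
duty = sum(states) / len(states)

budget = PowerBudget(mic_mw=1.0, camera_mw=30.0)
print(f"camera duty cycle: {duty:.3f}")
print(f"estimated savings: {budget.savings(duty):.0%}")
```

The two thresholds and the hold time keep the camera from flickering on and off around a single threshold. In this simple model the savings depend almost entirely on how rarely the camera has to wake, so real numbers hinge on the usage pattern.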

Precision Pickup: The Core of Multi-Modal Interaction

For AI glasses, the challenge is Perspective Synchronization. If a user looks at a menu and asks, “What is the best dish here?”, the AI must align the camera’s field of view with the user’s voice.

At Wuxi Silicon Source Technology (SISTC), our integrated microphone array modules are engineered for:

- Beamforming & SSL: High-precision Sound Source Localization (SSL) ensures the AI ignores background chatter and focuses solely on the wearer’s intent.

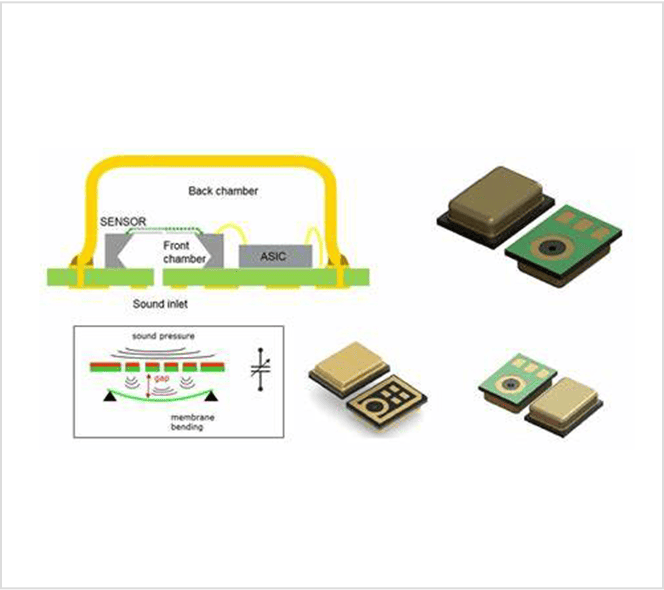

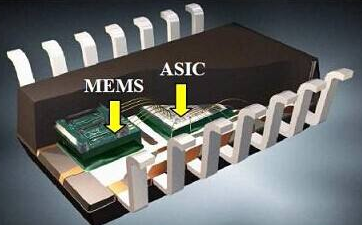

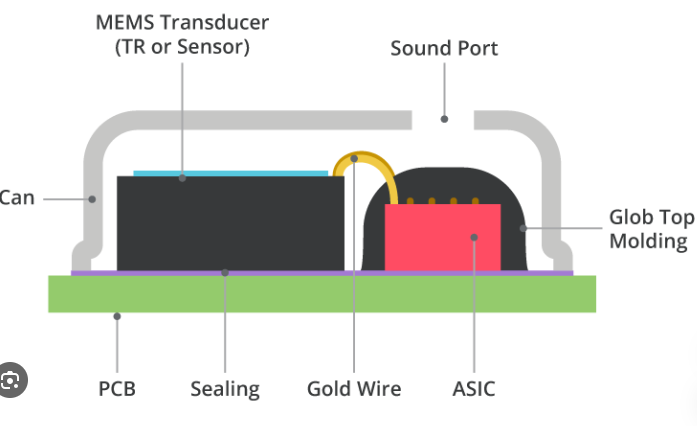

- Extreme Miniaturization: As earbuds and glasses stems grow longer to accommodate cameras, our MEMS MICs maintain an ultra-small footprint, leaving room for essential optics and batteries.

- High SNR for AI Inference: Multimodal models like Google Gemini require clean data. Our sensors deliver a high Signal-to-Noise Ratio (SNR), so voice commands reach the model with less noise, which improves recognition accuracy and reduces the denoising needed before inference.
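To make the Sound Source Localization bullet more concrete, here is a minimal Python sketch of the underlying principle for a two-microphone array: estimate the time difference of arrival by cross-correlation, then convert it to an angle. It is not SISTC's algorithm. The function names, the 10 cm microphone spacing, the 48 kHz sample rate, and the synthetic noise signal are all assumptions for illustration, and production systems typically use more microphones and frequency-domain methods such as GCC-PHAT.

```python
import math
import random

SPEED_OF_SOUND = 343.0  # m/s in air at roughly 20 degrees Celsius


def estimate_delay(ref, other, max_lag):
    """Return the lag in samples at which `other` best matches `ref`.

    A positive result means `other` receives the sound later than `ref`.
    """
    best_lag, best_score = 0, float("-inf")
    n = len(ref)
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        # Only sum over indices where both signals are defined.
        for i in range(max(0, -lag), min(n, n - lag)):
            score += ref[i] * other[i + lag]
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def angle_from_delay(lag_samples, sample_rate, mic_spacing_m):
    """Convert a delay to an arrival angle in degrees (0 = straight ahead)."""
    tau = lag_samples / sample_rate
    # Clamp to the physically valid range before taking the arcsine.
    ratio = max(-1.0, min(1.0, SPEED_OF_SOUND * tau / mic_spacing_m))
    return math.degrees(math.asin(ratio))


# Assumed geometry and sampling parameters (hypothetical values).
SAMPLE_RATE = 48_000
MIC_SPACING_M = 0.10
TRUE_DELAY = 7  # samples; simulates a source off to one side

# Synthetic test signal: white noise at mic A, the same noise delayed at mic B.
random.seed(0)
mic_a = [random.gauss(0.0, 1.0) for _ in range(2000)]
mic_b = [0.0] * TRUE_DELAY + mic_a[:-TRUE_DELAY]

# The largest physically possible delay is spacing / speed of sound.
max_lag = math.ceil(MIC_SPACING_M * SAMPLE_RATE / SPEED_OF_SOUND)
lag = estimate_delay(mic_a, mic_b, max_lag)
angle = angle_from_delay(lag, SAMPLE_RATE, MIC_SPACING_M)
print(f"estimated delay: {lag} samples, arrival angle: {angle:.1f} degrees")
```

With the estimated direction in hand, a beamformer can favor sound arriving from the wearer's mouth and attenuate other directions. Note that a 10 cm spacing at 48 kHz spans only about 14 samples of delay, which is why angular resolution depends so heavily on array geometry and sample rate.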

Navigating the Privacy Landscape

Privacy is no longer just a feature; it’s a legal requirement. Under the GDPR and the EU AI Act, data captured in public spaces, even low-resolution infrared imagery, can trigger heightened obligations when it is used to identify or monitor people.

SISTC’s Edge-Processing Strategy: We support the industry’s shift toward On-Device Processing. Our signal conditioning circuits are designed to interface with the latest edge-AI chips, so that acoustic data can be processed locally. This aligns with the “Privacy by Design” philosophy reportedly behind the H90 project, where raw data never leaves the device.

2026 and Beyond: Partner with the Experts

The race to build the ultimate AI wearable is a marathon of engineering compromises. Whether you are developing AI-powered hearing aids, smart glasses, or TWS earbuds, the quality of your audio interface is the ceiling of your AI’s performance.

With 15 years of innovation in MEMS MIC design and noise reduction algorithms, SISTC is ready to help you bridge the gap between human intent and machine intelligence.

Explore our latest product lineup: SISTC Product Catalog

Stay updated on AI hardware trends: Follow the MIT Technology Review AI section for the latest on wearable evolution.